One Week of Halcyon Reach

Ten days into building an EVE-clone-shaped MMO as a solo dev. What that actually looked like.

May 14, 2026

commit 0fa6eed

Date: Mon May 4 22:13:34 2026 -0700

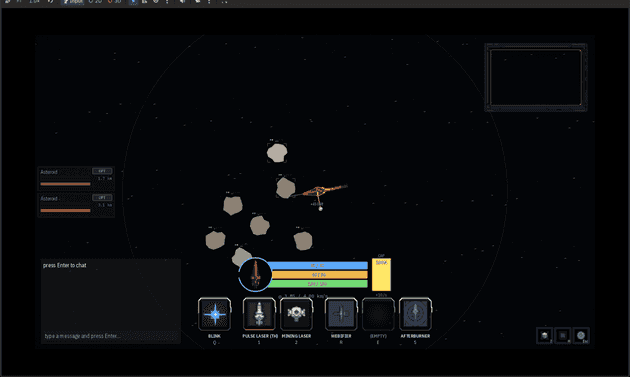

Initial Day One MVP: vector movement, real projectiles, lead-solver tracking

That was the first commit on my new game, ten days ago. I'm aiming for it to be an EVE Online clone with MOBA-shaped modules and a top-down isometric view, and I'm calling it Halcyon Reach.

I love EVE Online. I got engrossed in this world less by playing it and more by watching videos like Fredrik Knudsen's Down the Rabbit Hole, or by reading Andrew Groen's Empires of EVE, which is a real book documenting the history of a real, thriving player world. That's the thing about EVE: it's been running for more than twenty years, and over that time the players built a genuine history inside the game. That history is what makes EVE special, more than the gameplay itself.

What I don't love is the actual moment-to-moment gameplay. You orbit an NPC, press one or two buttons, and literally go AFK. As much as I love about EVE, something about it just keeps me from logging in: it lacks the kind of action-packed, exciting moment-to-moment combat that games like Dota 2 or CS2 have. I'd love for EVE to have something like that.

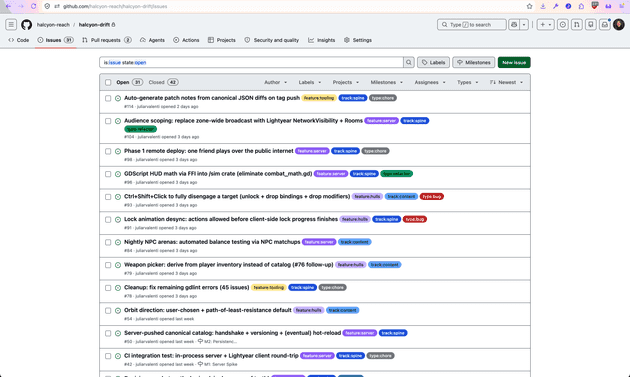

I'm only one person, and trying to tackle a giant massively-multiplayer game by myself is most likely a dumb idea. But I wanted to limit-test myself. How far could I take agentic development? Is it possible to solo-dev a project this ambitious? Ten days in, there's a headless Rust server with replicated ships and server-authoritative combat, a Godot client running on top of it, thirteen design docs, and a tracker with 73 issues filed and 42 closed.

Honestly, it's got a long, long way to go. I'm under no illusions about that. But I've learned more in these ten days than I have in months on most projects, and the development experience itself has been genuinely interesting to sit inside of, which is most of why I wanted to write any of this down.

The first day was just talking

I'm new to game dev, so I didn't write any code on day one. I opened a Claude Desktop session and we sketched the rough idea, free-form, just chatting about what the game is supposed to be. After a while, once it started to feel like something real, I asked the agent to switch modes and start asking me questions instead of just absorbing my ideas.

It looked like this. I'd say "okay, I want to focus on combat next." It would come back with: what kinds of weapons should be available? how many skillshots? are there fixed ammo counts? do modules draw capacitor like in EVE? how does damage type work? I'd answer, and the answers would force me to commit to specifics I hadn't actually thought through. A lot of what I came in believing turned out to be vibes or nostalgia, and the only way I found that out was being made to defend it out loud.

As the conversation built up, we collected the answers into DESIGN.md, a single unified design bible to drive the rest of the game. It's the foundation. Maybe a wobbly one made out of sticks, but it's something.

The hope is that this doc stays coherent enough to chisel down into something that makes sense as the game actually gets built.

Picking the stack

I knew going in that I wanted to avoid the kinds of problems EVE has at scale. EVE is famous for TiDi, short for time dilation, a system where the server gets so overloaded by player and module interactions that the entire game has to slow down to keep up. Historically that's meant large-scale battles in EVE are battles of patience as much as battles of player skill. Not really the feeling I want to recreate.
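To make the TiDi idea concrete, here is a minimal Rust sketch of how a time-dilation factor could be computed. It is not how EVE actually implements TiDi. It assumes a fixed amount of game time simulated per tick and a hypothetical dilation floor, and the names `dilation` and `floor` are illustrative only.

```rust
/// Game-seconds simulated per wall-clock second, given a fixed simulation
/// step and how long the server actually needed to process one tick.
///
/// Hypothetical model: if a tick finishes early, the server waits, so the
/// game runs at full speed (1.0). If a tick overruns, the next tick starts
/// late, so game time advances more slowly than real time. The result is
/// clamped to `floor`; below that, this model just reports the floor.
fn dilation(step_s: f64, tick_cost_s: f64, floor: f64) -> f64 {
    let wall_per_tick = step_s.max(tick_cost_s);
    (step_s / wall_per_tick).max(floor)
}

fn main() {
    let step = 1.0; // assumed: one game-second of simulation per tick
    let floor = 0.1; // assumed dilation floor
    for cost in [0.4, 1.0, 2.0, 4.0, 20.0] {
        println!("tick cost {cost:>4.1}s -> dilation {:.2}", dilation(step, cost, floor));
    }
}
```

The trade-off the sketch shows: the server never skips simulation work, so outcomes stay correct, but every player's clock slows down together once ticks get expensive.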

So I knew I needed a language that was fast and strongly typed. I'm not going to be reading most of the code the agent writes, and I needed enough compiler-enforced guardrails that regressive slop from the agent can't sneak past me. That's why I landed on Rust. If you want the longer version, I wrote an article a while back about why I think Rust is uniquely suited to AI-generated code. The short version: performance in the C++ ballpark, plus a compiler that does real work validating what comes out of the model.

That, combined with wanting a strong ECS, pointed cleanly at Bevy (a Rust game engine built around an ECS) and Lightyear (a networking library for Bevy). Pairing those with a Godot client meant a language boundary between client and server, and that brought a whole host of challenges with it: two languages that have to talk to each other across a glue layer, two type systems to keep in sync, two build pipelines to wire together. But I think in the end it'll be worth it.

I had never written Bevy or Lightyear before this project. One night I sat down with the agent for a long session and walked through the basics, where each library fits in the stack, how the dual integration between Godot and Bevy actually works. I'm not going to read the Bevy source. What I will do is build enough of a mental model that I can tell when something the agent generated doesn't belong.

From there I started writing more documents alongside the agent. ARCHITECTURE.md for the basic server-to-client structure. HULLS.md for the base set of ships at launch. MODULES-AND-FITTING.md for how equipment works. Each one is a baseline spec, a north-star bible the agent can pull from any time it needs to know how the project actually wants to be built. The more I write down, the less the agent drifts, because every rule I codify is context the next session inherits for free.

The combat problem I only found by playing

I came out of the design conversations confident that I wanted dodgeable projectiles. EVE's hit-roll-at-fire-time tracking is part of what gives the genre its strategic feel, but I wanted something more tactile, where physically moving your ship out of a shot actually mattered.

A demo I had running in a couple of hours proved me wrong. The combination of EVE-style module activation (click to fire, no aim) and a player who can freely steer meant the faster ship was effectively invincible. I sat in the demo flying a fast frigate and there was, functionally, nothing the attacker could do. The fight wasn't a fight; it was a chase the attacker would always lose. The fix, which EVE figured out a long time ago, is that the hit/miss outcome has to be decided at the moment of fire. The projectile that flies out of your gun is visual theater.
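Here is a minimal Rust sketch of what "decided at the moment of fire" can mean in code. Everything in it is hypothetical, not Halcyon Reach's actual combat code. The hit-chance curve is made up: it is loosely in the spirit of EVE's tracking but is not its real formula. `XorShift64`, `resolve_shot`, and `VisualProjectile` are illustrative names. The server rolls once when the gun fires, and the projectile it spawns only carries that result.

```rust
/// Tiny xorshift64 PRNG so the sketch has no dependencies. A real server
/// would more likely use a seeded RNG crate; this is an assumption.
struct XorShift64(u64);

impl XorShift64 {
    /// Returns a uniform value in [0, 1). The seed must be nonzero.
    fn next_f64(&mut self) -> f64 {
        let mut x = self.0;
        x ^= x << 13;
        x ^= x >> 7;
        x ^= x << 17;
        self.0 = x;
        (x >> 11) as f64 / (1u64 << 53) as f64
    }
}

#[derive(Debug, Clone, Copy, PartialEq)]
enum ShotOutcome {
    Hit { damage: f64 },
    Miss,
}

/// Hypothetical hit-chance curve: 1.0 when the target isn't sweeping across
/// the gun's view, 0.5 when its angular velocity equals the gun's tracking,
/// and falling off quickly beyond that.
fn hit_chance(angular_velocity: f64, tracking: f64) -> f64 {
    let ratio = angular_velocity / tracking;
    0.5f64.powf(ratio * ratio)
}

/// Decides the outcome once, on the server, at the moment of fire.
fn resolve_shot(
    angular_velocity: f64,
    tracking: f64,
    base_damage: f64,
    rng: &mut XorShift64,
) -> ShotOutcome {
    if rng.next_f64() < hit_chance(angular_velocity, tracking) {
        ShotOutcome::Hit { damage: base_damage }
    } else {
        ShotOutcome::Miss
    }
}

/// What the client renders: it carries an already-decided outcome, so its
/// flight path (and the target's steering) can't change the result.
struct VisualProjectile {
    outcome: ShotOutcome,
}

fn main() {
    let mut rng = XorShift64(0x9E37_79B9_7F4A_7C15);
    let shots: Vec<VisualProjectile> = (0..1000)
        .map(|_| VisualProjectile { outcome: resolve_shot(1.0, 1.0, 50.0, &mut rng) })
        .collect();
    let hits = shots
        .iter()
        .filter(|p| matches!(p.outcome, ShotOutcome::Hit { .. }))
        .count();
    println!("{hits} of {} shots hit", shots.len());
}
```

Because the roll happens before anything flies, steering after the shot can't change the result. Movement still matters, but only through the inputs to the roll (here, angular velocity relative to tracking). Outrunning the projectile does nothing.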

I had a strong intuition that was wrong. In a normal design process I would have happily defended dodgeable projectiles for weeks. Instead I got a playable demo in a few hours and watched my own design fail immediately.

Delegating to narrow agents

I knew from the start I'd need issue tracking, so I set it up early. By day three I was filing more than I could close, and it was clear the bottleneck wasn't going to be having a tracker; it was going to be keeping it clean enough to be useful.

So I built a project-manager subagent whose entire job is creating tickets. I describe a thing I noticed, it grounds the description against the current code and docs, searches the tracker for duplicates, and files a clean, well-formed ticket. That's it. It doesn't plan the work. It doesn't write the fix. It doesn't open PRs. It just turns my noticing into tracker entries that future-me (or another agent) can actually act on.

It's narrowly focused so that it has a clear pattern to follow and can replicate that pattern reliably. Every ticket comes out shaped the same way, against the same docs, deduped the same way.

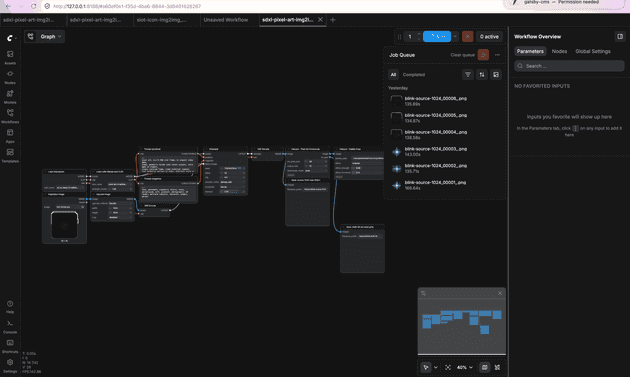

The art problem, currently in flight

I am not an artist.

I considered doing art by hand. After drawing a few UI elements I realized it just isn't scalable, and my art skills are not going to do this game any justice.

So I decided to stay in my lane and build a custom generative art pipeline in ComfyUI. It lets me set up an opinionated flow that keeps the aesthetic at least somewhat sane while still being better than static templates, placeholders, or asset packs. The ingredients: a local model, a consistent workflow, palette snapping at the output stage, and version-controlled prompts.
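Palette snapping is the easiest piece of that pipeline to show in isolation. In the pipeline itself it happens inside ComfyUI. The Rust below is only a standalone sketch of the general technique. It assumes 8-bit RGB pixels and plain squared Euclidean distance in RGB; a perceptual color space such as Oklab would likely match colors better. The function names and the palette are hypothetical.

```rust
type Rgb = [u8; 3];

/// Squared Euclidean distance between two colors in RGB space.
fn dist2(a: Rgb, b: Rgb) -> u32 {
    a.iter()
        .zip(b.iter())
        .map(|(&x, &y)| {
            let d = x as i32 - y as i32;
            (d * d) as u32
        })
        .sum()
}

/// Replaces every pixel with its nearest palette color (the first match wins on ties).
fn snap_to_palette(pixels: &mut [Rgb], palette: &[Rgb]) {
    assert!(!palette.is_empty(), "palette must not be empty");
    for px in pixels.iter_mut() {
        let current = *px;
        let nearest = palette
            .iter()
            .copied()
            .min_by_key(|&c| dist2(current, c))
            .unwrap();
        *px = nearest;
    }
}

fn main() {
    // Hypothetical three-color palette: black, white, and a muted red.
    let palette: [Rgb; 3] = [[0, 0, 0], [255, 255, 255], [200, 40, 40]];
    let mut pixels: Vec<Rgb> = vec![[10, 10, 10], [250, 240, 245], [180, 60, 50]];
    snap_to_palette(&mut pixels, &palette);
    println!("{pixels:?}"); // prints [[0, 0, 0], [255, 255, 255], [200, 40, 40]]
}
```

Snapping at the output stage means every asset lands on the same fixed set of colors, however much the model's colors wander from image to image.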

The goal is to push visual fidelity to "polished enough that I can honestly evaluate combat feel," without spending six months learning to draw. That ceiling has gotten meaningfully higher in the last year. I'm trying to find out how high.

Audio is the same kind of problem. I'm experimenting with hooking Claude Code up to the ElevenLabs MCP so the agent can generate sound effects and short music pieces directly into the project as it needs them. Early days, but the same idea: stay in my lane, lean on a tool that's actually good at the thing I'm not.

Where this goes

I don't know if this game ships. The full-loot PvP graveyard is large and I am one person. What I know is that ten days in there's a spine, the spine was the part I was most worried about, and in those ten days I've learned more about how to actually work with AI agents on a project this size than I had in months of using them at my day job.

I'm also just really enjoying it. I'm finally getting to use Rust on something real, and learning a new set of systems on a real project has been a great kind of challenge. Game dev with ECS is a completely different beast from what I do in my day job, and the performance and coding challenges it's offering have been a genuine pleasure to dig into.